How a No Code Platform Fostered Collaboration When Developing NLP Models

By Rebecca Hirschfield

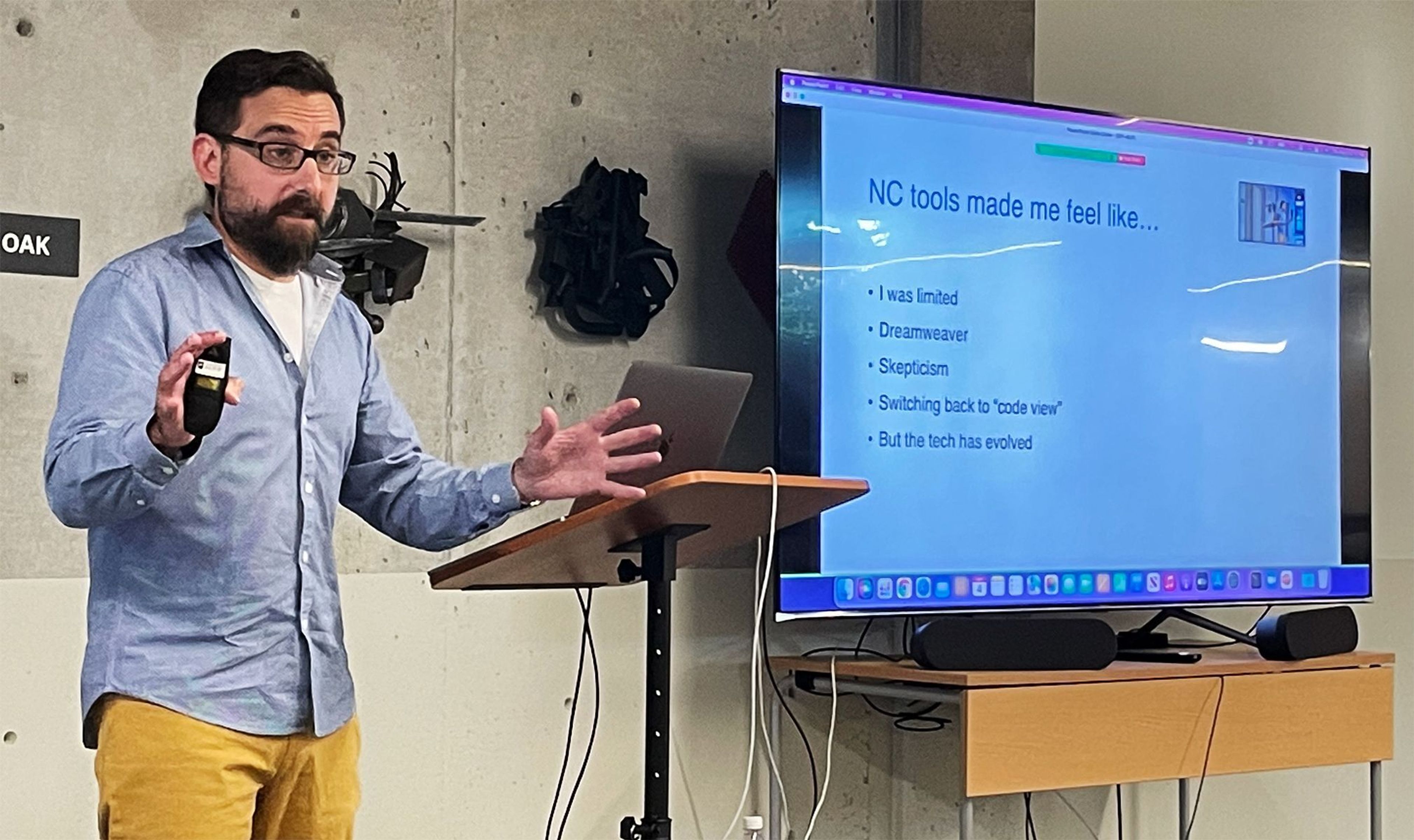

Babel Street recently hosted a gathering of the Boston NLP Group at its Somerville, MA office. The featured topics were “Use Cases for Do It Yourself NLP” and “Pattern-Based Few-Shot Entity Disambiguation.” Marcelo Bursztein, Founder and CEO of Novacene, presented the first topic, which focused on using a no code environment for natural language processing (NLP) application development and on how it promoted better collaboration across disciplines when building NLP models.

Many developers feel only so-so about no code tools, and Bursztein felt the same way – no code gave him a good start, but only got him part of the way before he had to go back into code. The tools felt limiting, both to his creativity and to his ability to solve problems.

Bursztein talked about the early days of Dreamweaver (first released in 1997), when web developers were hand-coding sites. Dreamweaver enabled drag-and-drop site development, but when the web team looked under the hood, they saw bloated and sloppy HTML. Some people still believe that no code tools produce dirty code, but the technology has come a very long way.

This background set up the two key concepts of Bursztein's talk: interdisciplinary collaboration and managing complex projects.

The first point is that today’s technology jobs are highly specialized, yet innovation and discovery are the result of diversified knowledge. This means that technologists will inevitably have to collaborate across disciplines to achieve breakthroughs.

This is particularly true when it comes to developing machine learning systems and NLP models because they fall outside of the methodology for more traditional software development. A typical software project is fairly linear (plan, build, deliver) whereas a machine learning project requires multiple rounds of data collection, reformulating the hypothesis and testing.

Collaboration offers a better way of handling this iteration, and this is where Bursztein introduced his case studies. The first involves a project called the Journalism Representation Index (JRI), which offers news outlets and media watchdogs a view into how well journalists represent all the stakeholders in stories of heightened public interest.

JRI asked Novacene to determine how representative a news article is based on who is quoted as a source. For example, if a news article quotes only a city chief of police, then the article might have a one-sided perspective. By contrast, if an article quotes the chief of police, an academic expert, a political figure and a celebrity, the news piece would likely be more representative of different views.
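The talk summary does not say how representativeness is scored. As one possible illustration, the sketch below measures how evenly an article's quoted sources are spread across source types using normalized Shannon entropy. The category list, the `representation_score` function, and the choice of entropy are all assumptions made for this example, not JRI's or Novacene's method.

```python
import math
from collections import Counter

# Hypothetical category set; the article gives examples but no fixed taxonomy.
SOURCE_TYPES = ("authority", "academic", "political", "celebrity")


def representation_score(quoted_types):
    """Normalized Shannon entropy of quoted source types.

    Returns 0.0 when at most one type is quoted and approaches 1.0 when
    quotes are spread evenly over every type in SOURCE_TYPES.
    """
    counts = Counter(t for t in quoted_types if t in SOURCE_TYPES)
    if len(counts) < 2:
        return 0.0
    total = sum(counts.values())
    entropy = -sum((n / total) * math.log(n / total) for n in counts.values())
    return entropy / math.log(len(SOURCE_TYPES))


# The two situations described above: a single police source versus four kinds of source.
one_sided = ["authority"]
balanced = ["authority", "academic", "political", "celebrity"]

print(representation_score(one_sided))
print(representation_score(balanced))
```

Under these assumptions, the article that quotes only the chief of police scores zero, while the article with four different kinds of source scores close to one.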

JRI asked Novacene to build an NLP model that addressed this representation issue. Novacene decided to use a no code platform to construct a two-step pipeline. The first step was a fine-tuned BERT named entity recognition (NER) model. The second step was a classifier that categorized each source as an authority, political figure, celebrity, and so on. The data scientists built the pipeline and deployed it in an app that allowed journalists to upload news articles and see the results of the algorithm.

The journalists could then provide corrections back to the data science team to retrain the NLP models. This ability empowered the domain experts on both sides to get involved with the testing and improvement of the product, despite being from very different backgrounds.

The collaboration revealed that the data science team's initial assumption, that a two-step pipeline would suffice, was too simplistic and did not capture article structure deeply enough from a journalistic point of view. The design evolved into a four-step pipeline: the team first extracted each “person” using NER, then applied source detection and source type classification to determine whether that person was a quoted source and what kind of source they were.

The last step in the pipeline, coreference resolution, handled cases where a person was introduced early in the article and quoted later with less context about who they were, or was later referred to by a pronoun.
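The four stages can be sketched end to end in a few lines. The version below is only a toy: regular expressions and a lookup table stand in for the fine-tuned BERT NER model and the trained classifiers, and the sample article, the title table, and every function name are hypothetical. It is meant to show how the stages hand data to one another, not how Novacene implemented them.

```python
import re

# Toy title-to-type table standing in for a trained source type classifier.
TITLE_TYPES = {"Police Chief": "authority", "Professor": "academic", "Mayor": "political"}
PRONOUNS = {"he", "she", "they"}

PERSON_RE = re.compile(r"(Police Chief|Professor|Mayor) ([A-Z][a-z]+) ([A-Z][a-z]+)")
QUOTE_RE = re.compile(r'"([^"]+)"\s+(\w+) said')

ARTICLE = (
    'Police Chief Dana Reyes addressed reporters on Monday. '
    '"We followed procedure," Reyes said. '
    'Professor Lena Cho of the state university reviewed the case. '
    '"The data tells a different story," she said.'
)


def split_sentences(text):
    # Naive splitter: break on whitespace that follows ., ! or ?
    return [s for s in re.split(r"(?<=[.!?])\s+", text) if s]


def extract_people(sentences):
    # Step 1 (stand-in for NER): find "Title First Last" mentions.
    people = []
    for i, sentence in enumerate(sentences):
        for title, first, last in PERSON_RE.findall(sentence):
            people.append({"name": f"{first} {last}", "surname": last,
                           "title": title, "sentence": i})
    return people


def detect_quotes(sentences):
    # Step 2 (source detection): find '"..." <speaker> said' attributions.
    quotes = []
    for i, sentence in enumerate(sentences):
        match = QUOTE_RE.search(sentence)
        if match:
            quotes.append({"quote": match.group(1).rstrip(","),
                           "speaker": match.group(2), "sentence": i})
    return quotes


def classify_source_type(person):
    # Step 3 (source type classification): look the title up in the toy table.
    return TITLE_TYPES.get(person["title"], "other")


def resolve_speaker(quote, people):
    # Step 4 (coreference): map a surname or pronoun back to an earlier person.
    earlier = [p for p in people if p["sentence"] <= quote["sentence"]]
    if quote["speaker"].lower() in PRONOUNS:
        return earlier[-1] if earlier else None  # most recent mention
    for person in earlier:
        if person["surname"] == quote["speaker"]:
            return person
    return None


def run_pipeline(text):
    sentences = split_sentences(text)
    people = extract_people(sentences)
    quotes = detect_quotes(sentences)
    for person in people:
        person["source_type"] = classify_source_type(person)
    results = []
    for quote in quotes:
        person = resolve_speaker(quote, people)
        if person is not None:
            results.append((person["name"], person["source_type"], quote["quote"]))
    return results


for name, source_type, quote in run_pipeline(ARTICLE):
    print(f"{name} ({source_type}): {quote}")
```

In the sample text, the second quote is attributed only to "she", so without the final step it could not be tied back to the professor introduced one sentence earlier. That is the kind of gap the journalists' feedback surfaced.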

The second use of a no code platform came during a project that analyzed open-ended survey responses collected by a public engagement and opinion research unit of the Canadian government. This research group came to Novacene with a stated objective of wanting to understand what people think about various subjects.

The NLP model engineers looked at this request and thought it would require a tool set consisting of text classification, clustering, topic modeling, and sentiment analysis. But the client analyst was asking for a word cloud, when what he really needed was a context-aware term frequency analysis tool.
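The article does not define what "context-aware" meant for this client. One plausible reading is that each frequent term is reported together with the words that appear around it, rather than as an isolated count the way a word cloud shows it. The sketch below illustrates that reading only; the stopword list, window size, sample responses, and function names are assumptions made for the example.

```python
import re
from collections import Counter, defaultdict

# Small illustrative stopword list; a real tool would use a fuller one.
STOPWORDS = {"the", "a", "is", "are", "and", "to", "of", "in", "too", "but", "for", "it"}


def tokenize(text):
    # Lowercase, keep alphabetic tokens, drop stopwords.
    return [t for t in re.findall(r"[a-z']+", text.lower()) if t not in STOPWORDS]


def term_contexts(responses, window=2):
    """Count each term and the terms found within `window` positions of it."""
    freq = Counter()
    contexts = defaultdict(Counter)
    for response in responses:
        tokens = tokenize(response)
        freq.update(tokens)
        for i, term in enumerate(tokens):
            neighbours = tokens[max(0, i - window):i] + tokens[i + 1:i + window + 1]
            contexts[term].update(neighbours)
    return freq, contexts


# Hypothetical open-ended survey responses.
responses = [
    "Transit fares are too high",
    "Transit service is unreliable in winter",
    "High rent is the main problem",
]

freq, contexts = term_contexts(responses)
for term, count in freq.most_common(2):
    print(term, count, dict(contexts[term]))
```

A word cloud built from these responses would show "high" as prominent without saying what is high. The neighbour counts show that it refers to fares in one response and rent in another.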

The data scientists used the no code platform to test some unsupervised NLP models against different data sets to understand how well the models generalized. The speed of building and testing the models opened avenues of discussion that helped build a closer working relationship between the team and the client.

Bursztein provided a powerful conclusion, which was that in both cases the domain experts on the client side expressed their needs within the confines of what they understood to be possible. Their request was “build this for me” rather than asking for a solution that best addressed their problem.

The collaboration achieved through using a no code platform made it possible to discover the desired outcome without dictating how the developer should get there. The exploration of the issue in the no code tool provided opportunities for both sides to gain deeper understanding of each other’s perspectives.